Data sharing between organizations for commercial purposes has been around for over 100 years. But until very recently, enterprises have been forced to rely on traditional data sharing methods that are labor-intensive, costly and error-prone. These methods are also more exposed to attackers and produce stale data. Snowflake Data Sharing, one of the newest innovations in Snowflake’s cloud-built data warehouse, has eliminated those barriers and enabled enterprises to easily share live data in real time via one-to-one, one-to-many and many-to-many relationships. Best of all, the data shared between data providers and data consumers doesn’t move.

Below is an example of how Snowflake Data Sharing let us create a live, secure data share in a fraction of the time, and at a fraction of the cost, of a standard method. Most interestingly, this application of Snowflake Data Sharing suggests that the range of problems modern data sharing can address is nearly endless.

The Challenge

The Federal Risk and Authorization Management Program (FedRAMP) “is a government-wide program that provides a standardized approach to security assessment, authorization, and continuous monitoring for cloud products and services.” Complying with the program’s approximately 670 security requirements and collecting supporting evidence is a significant challenge. But having to do so monthly, as required, is a Sisyphean task if you attempt it manually. Cloud providers must complete an inventory of all of their FedRAMP assets, which includes binaries, running network services, asset types and more. Automation is really the only logical approach to solving this FedRAMP inventory conundrum.

The Run-of-the-mill Method

People often gravitate to what’s familiar, so it’s no surprise we initially considered a solution that combined an AWS tool, an IT automation tool and some Python to clean up the data. However, we estimated that developing, testing, and deploying the code would take significant effort, on top of the ongoing maintenance it would require. Instead, we took a different approach.

The Solution

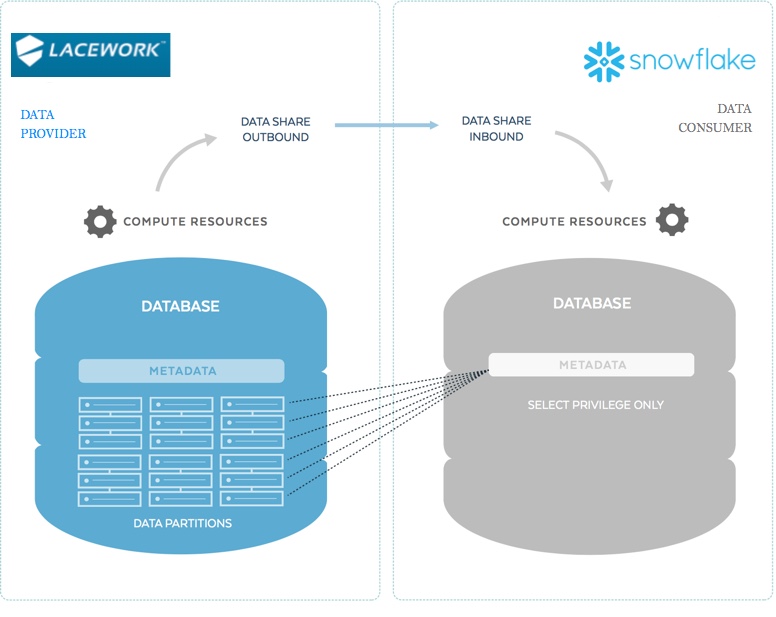

Snowflake’s Data Sharing technology is a fast, simple and powerful solution that allows us to maintain our security governance posture while sharing live data in real time without having to move the data. With modern data sharing, there is the concept of a data provider and a data consumer. For this project we engaged Lacework, a Snowflake security partner, as our initial data provider.

Lacework provides us with advanced threat detection by running their agents on our servers to help capture relevant activities, organize events logically, baseline behaviors, and identify deviations. In doing so, Lacework looks for file modifications, running processes, installed software packages, and network sockets, and monitors for suspicious activities in one’s AWS account. All of that data is then analyzed and stored in their Snowflake account. Lacework is both a Snowflake vendor and a Snowflake data warehouse-as-a-service customer. In other words, they use Snowflake to deliver their security services to Snowflake. Basically, Lacework already collects the data required to complete the FedRAMP system inventory collection task.

We contacted Lacework and presented them with our FedRAMP challenge and suggested leveraging data sharing between their Snowflake account and our Security Snowflake account. Within a couple of hours, they provided us with live FedRAMP data through Snowflake Data Sharing. Yes, you read that right. It only took a couple of hours. The following describes the steps for creating, sharing, and consuming the data:

Data Provider (Lacework) steps

- Create a share and give it a name

- Grant privileges on the database and its objects (schemas, tables, views) to the share

- Alter the share to add other Snowflake accounts (the data consumers) to it
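As a rough illustration, the provider steps above map onto a handful of Snowflake SQL statements. The Python sketch below only builds those statements as strings; it does not connect to Snowflake. Every name in it (`fedramp_share`, `security_db`, `inventory`, the table names and the consumer account `consumer_acct`) is a hypothetical placeholder, not the actual objects Lacework used.

```python
# Sketch only: generates the provider-side SQL as text.
# All object and account names below are hypothetical placeholders.

def provider_share_statements(share, database, schema, tables, consumer_accounts):
    """Return, in order, the SQL a data provider could run to publish a share."""
    statements = [
        # Step 1: create the share and give it a name
        f"CREATE SHARE {share};",
        # Step 2: grant privileges on the database and its objects to the share
        f"GRANT USAGE ON DATABASE {database} TO SHARE {share};",
        f"GRANT USAGE ON SCHEMA {database}.{schema} TO SHARE {share};",
    ]
    for table in tables:
        statements.append(
            f"GRANT SELECT ON TABLE {database}.{schema}.{table} TO SHARE {share};"
        )
    # Step 3: add the consumer accounts to the share
    accounts = ", ".join(consumer_accounts)
    statements.append(f"ALTER SHARE {share} ADD ACCOUNTS = {accounts};")
    return statements


example = provider_share_statements(
    share="fedramp_share",
    database="security_db",
    schema="inventory",
    tables=["packages", "network_services"],
    consumer_accounts=["consumer_acct"],
)
for statement in example:
    print(statement)
```

In practice these statements would be run by a role that is allowed to create shares, through a worksheet or a client of your choice.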

Data Consumer (Snowflake Security) steps

- Create a database from the share

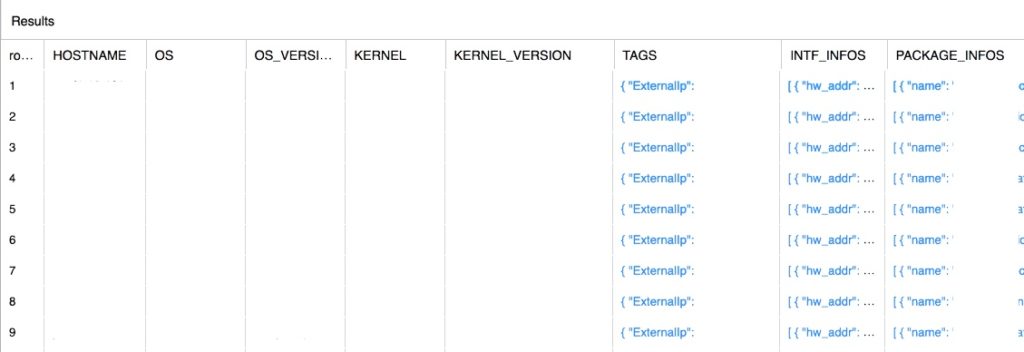

- Run some SQL queries and voilà! We have our FedRAMP system inventory data (we redacted the results in this image for security reasons)

Again, the whole data sharing effort took just a few hours.
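The consumer side is even shorter. The sketch below, again in Python and again only producing SQL text, shows what the consumer steps could look like. The provider account `provider_acct`, the share `fedramp_share`, the local database `lacework_shared`, the role `security_analyst` and the table `inventory.packages` are all assumed names for illustration.

```python
# Sketch only: generates the consumer-side SQL as text.
# All object, role and account names below are hypothetical placeholders.

def consumer_share_statements(provider_account, share, local_database, role, schema, table):
    """Return, in order, the SQL a data consumer could run to use a share."""
    return [
        # Create a read-only database on top of the provider's share
        f"CREATE DATABASE {local_database} FROM SHARE {provider_account}.{share};",
        # Let another role in the consumer account query the shared objects
        f"GRANT IMPORTED PRIVILEGES ON DATABASE {local_database} TO ROLE {role};",
        # Query the live shared data like any other table
        f"SELECT * FROM {local_database}.{schema}.{table} LIMIT 10;",
    ]


example = consumer_share_statements(
    provider_account="provider_acct",
    share="fedramp_share",
    local_database="lacework_shared",
    role="security_analyst",
    schema="inventory",
    table="packages",
)
for statement in example:
    print(statement)
```

No data is copied by any of these statements; the database created from the share simply points at the provider's objects.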

Beyond FedRAMP

You are probably asking yourself at this point, “Why didn’t you just ask Lacework to generate a FedRAMP report instead of doing data sharing?” I would wholeheartedly agree with you if we were dealing with a conventional data warehouse built with a ’90s philosophy of sharing nothing. But Snowflake is the farthest thing from a conventional data warehouse, and data sharing is nothing short of transformational. How so?

In addition to consuming data from Lacework, we also consume data from other data providers that share application logs, JIRA cases and more. We combine these data sources to automatically track software packages to determine if they are approved or not. Before data sharing, this activity was a time-consuming, manual process. Now, the team is free to focus on more critical security activities since data sharing has helped with FedRAMP and has improved our overall security posture.
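To make the package-tracking idea concrete, here is a minimal Python sketch of the comparison at its core: installed packages from one source checked against an approved list from another. It is an assumption about the general shape of such a check, not our actual pipeline; the `InstalledPackage` type, the `find_unapproved` helper and the sample rows are all invented for illustration.

```python
from dataclasses import dataclass

# Sketch only: the record layout and sample values are hypothetical.

@dataclass(frozen=True)
class InstalledPackage:
    host: str
    name: str
    version: str


def find_unapproved(installed, approved):
    """Return installed packages whose (name, version) is not on the approved list."""
    return [pkg for pkg in installed if (pkg.name, pkg.version) not in approved]


# Rows as they might come from a shared inventory table (invented data)
installed = [
    InstalledPackage("host-a", "openssl", "1.1.1"),
    InstalledPackage("host-a", "curl", "7.58.0"),
    InstalledPackage("host-b", "netcat", "1.10"),
]

# Approvals as they might come from a ticketing source (invented data)
approved = {("openssl", "1.1.1"), ("curl", "7.58.0")}

for pkg in find_unapproved(installed, approved):
    print(f"{pkg.host}: {pkg.name} {pkg.version} is not approved")
```

With shared data, the same comparison can be expressed as a join between the shared tables, so it runs against live data instead of a periodic export.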

Conclusion

As I wrote this blog, I watched my kids enthusiastically playing with their new Lego set. It’s remarkable how simple blocks can form such complex structures. Modern data sharing has a similar quality: it offers data consumers and data providers countless ways of solving challenges, and with them, new business opportunities.